
Yesterday I talked about Wikipedia as a technology-mediated form of collective intelligence. Today I want to broaden the subject to artificial intelligence and decision-making, including some preliminary thoughts on the radical changes in identity and judgement brought on by the shift to the Anthropocene. All of this forms a key part of the next three Cynefin Centre Retreats, which I will also discuss: Whistler in just over a week’s time, followed by Wales in November and Norway in January. I was meant to write this post a good month or so ago, but a whole bunch of nonsensical and unnecessary crap has consumed too much of my time over the last two months (one of these days I may write it up, but I’m not allowed to at the moment), so I’m late posting. If anyone wants to make Whistler, or wants a combo deal for Whistler and Wales, let me know. This post is also informed by the recent event in Singapore, where the role of artificial ‘intelligence’ was one of the big topics in discussions on reimagining the future.

To be clear, this is a major topic and it’s not possible to do more than raise some issues in a brief blog post. But the pace of change and the lack of consideration of consequences in the wider population, coupled with malicious intent and blind optimism, mean that I (and others) place AI up there with nuclear war, biological engineering and global warming as an existential threat to humanity. This is not all dire prognostication; there are many profoundly valuable and human uses of digitisation, from simple navigation to improved diagnosis in medicine. My purpose here is not Luddite in nature: AI is with us, it’s part of the rapidly evolving present, and denial is the last thing we need.

To illustrate my concern I’ll put up three sound bites from my summaries at the Singapore IRHASS event. Please remember these were designed as, or came to me as, rhetorical devices rather than considered academic statements, but I stand by them anyway!

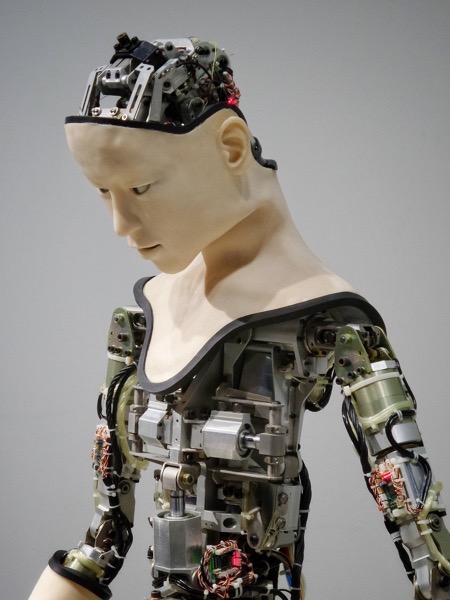

Then of course we have the nature of AI itself: it’s a type of swarm intelligence, with algorithms responding to stimuli, what in complexity theory I would call an unbuffered feedback loop. Remember the computer trading algorithms that resulted in the financial meltdown? A swarm has no intention and no governing ethic or purpose; it just acts, and evolves. Even a basic reading of science fiction from recent years should be enough to make you realise the danger of allowing algorithmically based decision tools to run unchecked. In modern warfare it’s obvious, but the issue extends to many other fields, including AI-based manipulation of the internet, which is now capable of targeting individuals to the point of mental breakdown. Remember that the history of humanity is about warfare and that is the basis of any training AI will receive; add to that the fact that a generation of gamers are the main practitioners, and you see my point.

From my perspective we need to start paying attention to the wider issues of human judgement and decision-making: when it can be augmented by machine intelligence, and when, or if, it should be replaced. With that comes the wider issue of the role of ethics and aesthetics in making us human in the first place. We will start that investigation at the coming Whistler retreat, and we have a pretty stellar cast to assist in the process. That will then roll over into Wales and Norway, concluding the series early in 2020. Each event stands alone, but we already have some people booked on all three.

That means a public discourse, informed by both threat and opportunity, but it also requires us to rethink education, and that is something companies and organisations can address in the short term. I was recently commissioned to write an article on ethics for a book edited by Mark Smalley in the new ITIL series called High Velocity IT. He greatly improved my conclusion, which I repeat below:

> Ethics is a much-neglected aspect of engineering education on which the promise, opportunity and threat of modern technology depends. It’s not enough to provide post-core training: it must be part of the education that precedes it. Measuring and monitoring attitudes related to ethical behaviour is as important as rule creation and compliance management. Just as a company monitors its cash flow, so it should monitor the flow of virtue within its employees and networks.

I would now add to that: ensure that you measure and reflect on cognitive, behavioural and cultural diversity in your organisation. That is the subject of one of our next Powered by SenseMaker® Pulses, which are currently being launched.

More this week on the wider questions of polity and ethics in the Anthropocene, where I want to discuss some of the issues we will address in the Welsh retreat later this year. Book now: that one has limited places given the location.

Robot/Android picture by Franck V. on Unsplash

Picture of bees is by Annie Spratt on Unsplash

Cognitive Edge Ltd. & Cognitive Edge Pte. trading as The Cynefin Company and The Cynefin Centre.

© COPYRIGHT 2024
