
Plans for a series of key blog posts after my March update series on Cynefin have been disrupted by COVID-19 and by the need both to manage the situation within Cognitive Edge and the Cynefin Centre and, more importantly, to create a range of offerings that provide capability around the pandemic crisis. One of the main points I have made is the need to focus on learning and innovation in parallel with crisis management processes. We’ve held a webinar on the crisis, and my thanks go to the faculty who volunteered at short notice in response to my email, to Sonja for chairing the event (and reflecting on it) and to Dave Sharrock for suggesting the idea in the first place. Registrations went over 2000, which has never happened before. In parallel, one of the big consultancy companies organised something similar (with much bigger mailing lists) but managed fewer than 500. I think part of the reason is that the pandemic has triggered a general realisation that the standard practices of the last few decades are, to quote Lincoln's address to Congress on 1 December 1862 during the Civil War, inadequate to the stormy present. To continue the quote: As our case is new, so we must think anew, and act anew. Complexity, the science of uncertainty, allows us insight into reality; anthro-complexity, the science of human complex adaptive systems, allows us to co-create new realities out of the crisis. Expect to see a lot more here and in the handbook on Chaos and Complexity that we hope to publish with the EU Policy Lab this week. It is divided into a deeply pragmatic field book and a more substantial theory piece. Most of the material for both lies in posts on this blog over the years, but there are a few gaps that need filling and this is one of them.

The focus of any complexity-based approach to crisis management is on rapid assessment of where you are and what pathways are open. Part of the problem in doing that, aside from the politics of blame avoidance and selfish exploitation, is that our ways of seeing and understanding things are largely based on what has worked in the past, unless and until we are shocked out of it. While that shock comes quickly in so-called primitive societies, modern organisational theory and design, together with the excessive delegation of solutions to consultants, mean that most organisations have a higher level of immunisation than should be the case. They are slow to admit the crisis and then in danger of only doing what is proven. Some people call this cognitive bias, but as Gary Klein has pointed out, evolution doesn’t throw up adaptations that have no utility, and the so-called biases are actually a form of evolutionary heuristic that reduces the energy cost of decision-making in the majority of cases. So at any time, but particularly in a crisis, we need to deploy human sensor networks that are culturally, experientially, geographically and ideally temporally diverse, for both situational assessment and scenario planning. More on that tomorrow, but a key part of doing that at scale and in real time is the distributed ethnographic approach that we developed with SenseMaker®. For an appreciation of its use in risk, scroll through this report; you will find the relevant material from page 77 onwards.

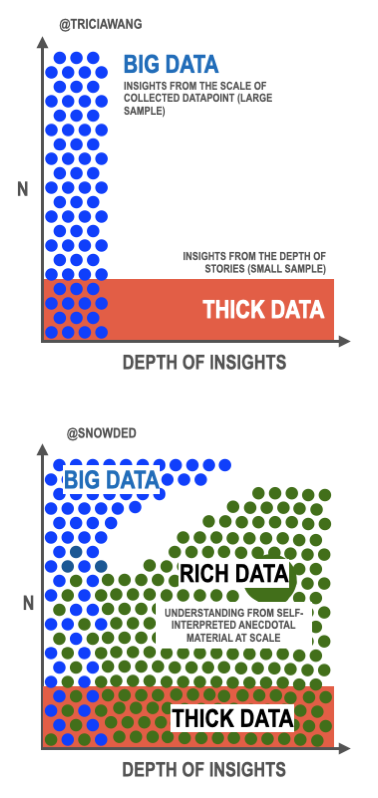

Now when you talk to people about mass distributed sense-making, you often hit the Big Data argument: give me a bright mathematician, technology tools and lots of data and I will create meaning without effort. This approach has been extensively criticised by experts in various domains, given the basic uncertainties around assumptions and the active danger of confusing correlation with causation. This article, in the context of COVID-19, is well worth a read. That said, there are clearly massive opportunities here, but we need to be cautious. Tricia Wang, in a Medium article that triggered this post, argues for thick data to complement big data, and the first of the two illustrations above is from that article. To quote:

Lacking the conceptual words to quickly position the value of ethnographic work in the context of Big Data, I have begun, over the last year, to employ the term Thick Data (with a nod to Clifford Geertz!) to advocate for integrative approaches to research. Thick Data is data brought to light using qualitative, ethnographic research methods that uncover people’s emotions, stories, and models of their world. It’s the sticky stuff that’s difficult to quantify. It comes to us in the form of a small sample size and in return we get an incredible depth of meanings and stories. Thick Data is the opposite of Big Data, which is quantitative data at a large scale that involves new technologies around capturing, storing, and analyzing. For Big Data to be analyzable, it must use normalizing, standardizing, defining, clustering, all processes that strip the data set of context, meaning, and stories. Thick Data can rescue Big Data from the context-loss that comes with the processes of making it usable.

Now I have no disagreement with this, and it is a neat summary. But the issue with ethnographic work is that it takes time and is heavily dependent on the integrity of the ethnographer (if you don’t know about the issues here, go and look at the way the American Anthropological Association treats its members who work with the military on human terrain mapping) and, just as importantly, on the willingness of the decision-maker to accept what they say. Too many decision-makers have commissioned too many qualitative studies over the years not to know that the way such studies are set up can determine the outcome. The other issue involves another quote:

There’s a big difference between anecdotes and stories, however. Anecdotes are casually gathered stories that are casually shared. Within a research context, stories are intentionally gathered and systematically sampled, shared, debriefed, and analyzed, which produces insights (analysis in academia). Great insights inspire design, strategy, and innovation.

This is true, but the act of stimulation itself can bias the results, especially in a setting where the person asking the questions is seen as having authority, which, despite their best endeavours, is likely to be the case. The problem is that it is the anecdotal data, the things that we casually share, that are most likely to represent what we actually think and to form the basis for action.

So this was the dilemma facing me over a decade ago in our DARPA projects, and the solution we came up with, described on the SenseMaker® site, is to make people ethnographers of their own anecdotal data, either as direct interpreters or as agents of capture who are proximate to, and part of, the culture. In effect, that is a quantitative approach in what is traditionally a qualitative domain, and it can provide real-time results from highly distributed populations. So when I saw the diagram above, I saw the opportunity for a both/and/and post. Tricia is arguing for thick data to complement big data, and I am adding to that the use of rich data to link the two. So I created the second of the two diagrams: meaning and scale. In another minor change, I suggest that big data is more likely to gain meaning at higher volumes.

The other key aspect of rich data is that people are empowered to interpret their own anecdotal material; it is not delegated to an algorithm or an anthropologist. Another phrase becoming current here is epistemic justice: allowing people their own voice, not just as passive contributors of stories or anecdotes but as active agents in their interpretation. And of course, the interpretative frameworks are derived from the detailed work of thick data practitioners, and all three types of data need to be entangled as needed to create meaning and support effective decision-making. More on entanglement in a future post, but real-time, high-volume data with meaning is critical in normal times and hypercritical in a crisis.

And apologies for the title, I couldn’t resist it …

Banner picture by Gerry Cherry on Unsplash

Cognitive Edge Ltd. & Cognitive Edge Pte. trading as The Cynefin Company and The Cynefin Centre.

© COPYRIGHT 2024
